Public Private Partnerships in the Age of AGI

During a research fellowship with Convergence Analysis, I co-designed a framework exploring how partnership models may need to evolve as AI reshapes labour markets and economic risk. The central idea is simple: different types of uncertainty require different coordination mechanisms.

You can read the full report here.

AI introduces new coordination failures. Because of key uncertainties and private and public externalities, market signals alone cannot efficiently allocate investment.

These failures manifest as structural underinvestment, particularly in enabling systems such as compute, skills, assurance and resilience, where returns are long‑term and spill over across sectors.

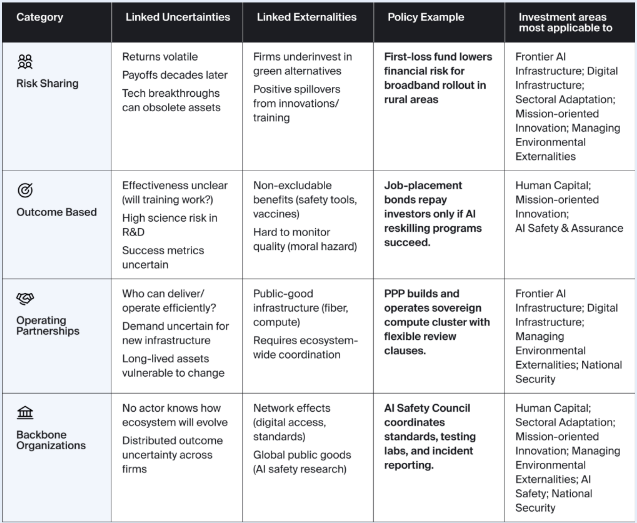

A new generation of public–private partnerships needs to be designed to overcome these failures, in the following categories:

risk‑sharing models that distribute uncertainty across actors

outcome‑based partnerships that tie funding to measurable progress

operating partnerships that embed private capabilities within public missions

backbone organisations that coordinate and sustain cross‑sector collaboration

Together, these form an adaptive architecture for policy evolution in the age of transformative AI.

The coordination problem behind AI adoption

Artificial intelligence is often framed as a technology problem, but in reality, it is increasingly a coordination problem too.

When technological change accelerates, the gap between private incentives and social outcomes widens, with the potential for underinvestment in workforce transition, fragmented infrastructure development and delayed responses to systemic risks.

The economic challenge is both how to regulate the development of AI and how governments and firms coordinate investment and risk sharing as labour markets evolve.

An uncertainties and externalities framework is a useful prism through which to explore this. AI deployment creates several distinct types of uncertainty: firms face volatile returns from emerging technologies, governments face uncertainty over labour market impacts, and both sides confront investments whose payoffs may only appear years later. At the same time, many of the benefits of AI adoption are externalities. Training programmes, safety standards and infrastructure investments often generate value that spills beyond the firm making the investment. When externalities and uncertainty interact, markets tend to underinvest.

The usual response is policy intervention. But traditional tools such as subsidies or regulation can often fail because they do not address the underlying coordination problem.

What is required instead are institutional mechanisms that align incentives between governments and firms under uncertainty.

A framework for partnership design

One way of thinking about this is to match the type of economic uncertainty with the type of partnership mechanism most suited to addressing it.

Our framework below illustrates four broad categories of public–private coordination mechanisms.

Each addresses a different economic failure that emerges when transformative technologies reshape markets.

1 - Risk-sharing mechanisms

Some investments involve long time horizons, volatile returns or technologies that may quickly become obsolete. In these environments firms often delay investment because the downside risks are too large relative to the private return.

Risk-sharing mechanisms address this problem by redistributing early-stage risk.

Examples include first-loss funds, co-investment structures, or government guarantees that reduce the financial exposure of private actors. These mechanisms are particularly relevant for frontier infrastructure and experimental technologies, where uncertainty over future markets is high.
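The arithmetic behind a first-loss structure can be illustrated with a short sketch. It assumes a simple two-tranche fund in which a public first-loss tranche absorbs losses before private investors do; the function name, parameters and figures are hypothetical and are not taken from the report.

```python
def allocate_loss(total_loss: float, first_loss_tranche: float) -> tuple[float, float]:
    """Split a loss between a public first-loss tranche and private investors.

    Assumption: the public tranche absorbs losses first, up to its full size,
    and private investors bear only what remains.
    """
    if total_loss < 0 or first_loss_tranche < 0:
        raise ValueError("loss and tranche size must be non-negative")
    public_loss = min(total_loss, first_loss_tranche)
    private_loss = total_loss - public_loss
    return public_loss, private_loss


# Illustrative figures only: a 20m public first-loss tranche and a 30m loss.
public, private = allocate_loss(30.0, 20.0)
print(public, private)  # 20.0 10.0
```

With a smaller loss, for example 5m against the same tranche, private investors would bear nothing, which is the sense in which the structure reduces their downside exposure.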

2 - Outcome-based partnerships

In other areas the uncertainty lies predominantly in whether the project will succeed. Training programmes are an illustrative example. Governments may subsidise reskilling programmes, for instance, but it is often unclear whether they will actually lead to employment. Outcome-based partnerships attempt to solve this by tying financial returns to measurable outcomes.

Mechanisms such as social impact bonds allow investors to fund programmes while governments only pay if results are achieved. This approach shifts incentives toward measurable economic outcomes rather than programme inputs.
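A stylised version of the payment logic may make the incentive shift clearer. The sketch below assumes a single outcome metric (participants in employment), a fixed payment per outcome and a cap on total government payments; real social impact bonds typically have more detailed terms, and all names and figures here are hypothetical.

```python
def outcome_payment(participants_employed: int,
                    payment_per_outcome: float,
                    payment_cap: float) -> float:
    """Government payment under an assumed pay-per-outcome contract with a cap."""
    if participants_employed < 0:
        raise ValueError("participants_employed must be non-negative")
    return min(participants_employed * payment_per_outcome, payment_cap)


def investor_net_return(payment: float, programme_cost: float) -> float:
    """Investors fund the programme up front and recover only outcome payments."""
    return payment - programme_cost


# Illustrative figures only.
cost = 400_000.0
success = outcome_payment(120, 5_000.0, 500_000.0)  # 600,000 earned, capped
failure = outcome_payment(0, 5_000.0, 500_000.0)    # no outcomes, no payment
print(investor_net_return(success, cost))  # 100000.0
print(investor_net_return(failure, cost))  # -400000.0
```

In this simplified setup the government pays nothing if no participant finds work, so the risk of programme failure sits with investors rather than with the public budget.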

3 - Operating partnerships

Some investments require ongoing coordination rather than one-off financing.

Digital infrastructure, compute capacity, or shared research facilities are examples where long-term operation matters as much as initial construction. In these contexts, operating partnerships such as public–private infrastructure concessions may be more appropriate.

These structures combine public oversight with private operational expertise while allowing contracts to adapt as technologies evolve.

4 - Backbone organisations

Finally, some areas require ecosystem-level coordination rather than bilateral partnerships.

AI safety standards, shared evaluation infrastructure, and incident reporting systems are examples of problems where no single organisation has sufficient authority or information to coordinate the system.

Backbone organisations can fill this role by convening stakeholders, coordinating standards, and providing shared infrastructure.

In emerging technology sectors, these institutions often act as governance anchors for the broader ecosystem.
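The matching between uncertainty type and mechanism described in the four categories above can be summarised as a simple lookup. The sketch below is only a shorthand: the enum labels are paraphrases chosen for illustration, and a real case may involve several overlapping uncertainties rather than exactly one.

```python
from enum import Enum


class Uncertainty(Enum):
    # Labels are illustrative paraphrases of the four categories in the text.
    VOLATILE_LONG_HORIZON_RETURNS = "long horizons, volatile returns or obsolescence risk"
    PROJECT_SUCCESS = "unclear whether the project will achieve its outcomes"
    ONGOING_OPERATION = "long-term operation matters as much as initial construction"
    ECOSYSTEM_COORDINATION = "no single organisation can coordinate the system"


MECHANISM_FOR = {
    Uncertainty.VOLATILE_LONG_HORIZON_RETURNS: "risk-sharing mechanisms",
    Uncertainty.PROJECT_SUCCESS: "outcome-based partnerships",
    Uncertainty.ONGOING_OPERATION: "operating partnerships",
    Uncertainty.ECOSYSTEM_COORDINATION: "backbone organisations",
}


def suggest_mechanism(uncertainty: Uncertainty) -> str:
    """Return the partnership category the framework pairs with an uncertainty type."""
    return MECHANISM_FOR[uncertainty]


print(suggest_mechanism(Uncertainty.PROJECT_SUCCESS))  # outcome-based partnerships
```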

Why this matters for firms

For companies adopting AI, the economic question is not simply which technologies to deploy. It is how adoption interacts with labour markets, regulatory expectations, and broader economic systems.

Firms that move quickly without addressing these coordination challenges may face:

workforce transition bottlenecks

regulatory backlash

fragmented infrastructure development

reputational risks associated with labour displacement

Conversely, firms that engage early with governments and ecosystem partners can shape the institutional frameworks that support sustainable adoption. This often means designing partnership models that align incentives across actors rather than relying solely on internal strategy.

From economic theory to practical strategy

For policymakers, this framework provides a way to think systematically about which partnership structures match different economic risks. For firms, it provides a lens for identifying where collaboration with governments or sector partners may accelerate adoption while reducing long-term risk.

In practice, this kind of analysis often begins with three questions:

Where are the key uncertainties in AI adoption within a sector?

Which externalities are preventing efficient private investment?

Which coordination mechanism best aligns incentives between public and private actors?

Answering those questions requires economic analysis that combines market structure, labour dynamics, and institutional design. As AI continues to reshape industries, the organisations that navigate this transition most effectively will not simply deploy new technologies.

They will design new coordination models for the economy that emerges around them.

Strategic alignment with public missions unlocks growth, as governments increasingly channel AI investment toward priority sectors. Firms that position themselves within these missions gain early access to capital, data, and regulatory clarity.

Hybrid investment vehicles are expanding, creating opportunities for companies to co‑develop infrastructure, standards and assurance frameworks with public partners while sharing risk through outcome‑based models.

Continuous policy adaptation demands active engagement: firms must build internal intelligence to anticipate shifts in AI governance, safety and workforce policy rather than reacting post‑hoc.

Ecosystem positioning is the new competitive edge. Success will hinge on embedding within national AI strategies, cross‑sector alliances, and shared‑risk R&D programmes that amplify both influence and resilience.

ABOUT

RG Economics, a limited company registered in England and Wales under company number 17139920, provides independent economic, quantitative and AI advisory services to support high-stakes strategic decisions in dynamic market environments.
